Artificial Intelligence (AI), once found only in science fiction, is now part of daily life, from Siri's voice on your smartphone to self-driving cars. The technology drives innovation across many sectors, simplifying everyday tasks and transforming complex business operations.

Key Takeaways

- Stay alert to the sophisticated use of AI technologies like voice cloning and deepfakes in scams.

- Spot AI-driven scams by noticing inconsistencies in audio or video messages and questioning urgent requests.

- Educate yourself about AI scam trends and update your security measures to protect against these threats.

- Share knowledge about AI scams with your network to enhance collective awareness and defense.

Yet as AI continues to evolve, it opens new avenues for cybercriminals to exploit. As the technology advances, it is increasingly important to understand both how AI is changing and where its pitfalls lie.

Key Technologies Fueling Modern Imposter Scams

Voice Cloning

Voice cloning uses AI to copy someone's voice. The software analyzes the characteristics of a person's voice and then generates speech that closely replicates it.

Cybercriminals can use voice cloning to impersonate individuals over the phone or in voice messages. The technique can be used in vishing (voice phishing) attacks, in which a victim receives a call from what sounds like a familiar voice.

For example, a scammer might target a grandparent with a call that seems to come from their grandchild in college. The cloned voice sounds distressed and claims to have been in an accident. The caller urgently requests money for supposed medical and legal expenses, pleading for secrecy and quick help. The grandparent, convinced and concerned, may send money, only to discover later that it was a scam exploiting their trust and family bond.

Deepfake Technology

Deepfake technology is a type of advanced AI that creates highly realistic videos that are hard to distinguish from real ones. It uses machine learning to manipulate existing video footage so that someone appears to say or do things they never actually did.

To manipulate victims, scammers can use deepfake technology to create fake videos of trusted individuals, like celebrities, politicians, or even family members. For instance, they might make a video that shows a company's CEO instructing employees to execute unauthorized financial transactions or to disclose confidential information.

AI-Generated Phishing Emails

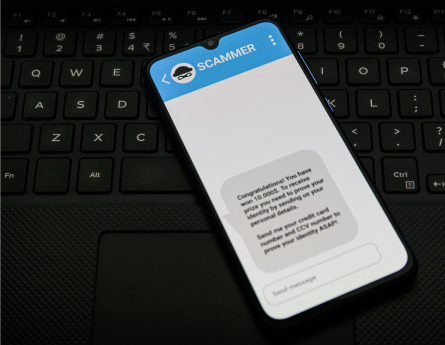

AI can be used to craft highly personalized and convincing phishing emails. By analyzing large amounts of data, AI can create messages tailored to individual recipients, increasing the likelihood of deception.

Using this technology, scammers can send out convincing phishing emails that appear to be from trusted sources, such as banks or service providers. These emails might include specific personal information, making them seem more legitimate, and can trick people into clicking on malicious links or providing sensitive information.

Spotting AI-Driven Scams and Protecting Yourself

In the face of these advanced AI-driven scams, staying informed is key to protecting yourself. Here are some tips to help you recognize and guard against them:

- Verify the source.

- Look for inconsistencies.

- Be skeptical of urgent requests for money or information.

- Educate yourself about AI technologies.

- Update your security measures.

- Do not click on suspicious links.

We urge you not to keep this vital information to yourself. Share this knowledge with your friends and family. The better informed we are, the less likely scammers are to succeed.

If you have any concerns or questions about potential scams or suspicious account activity, do not hesitate to contact us. Our team is dedicated to your financial safety and is always here to help.
